3D Printed ASL Robotic Hand

Machine-learning-powered robotic hand with precision 3D-printed parts and servo-controlled articulation for American Sign Language gestures.

Overview

This project aimed to create an accessible communication tool for American Sign Language (ASL) by combining mechanical design, machine learning, and embedded systems. The system recognizes hand gestures from a camera in real time and reproduces the corresponding ASL signs on the robotic hand, demonstrating the potential of AI-powered assistive technology.

The system integrates computer vision for gesture detection, TensorFlow for ML inference, and servo-controlled articulation for precise finger movements. The components were designed to work together, from the 3D-printed mechanical structure to the trained neural network.

Mechanical Design

The hand's mechanical structure features precision 3D-printed components designed in SolidWorks. Each finger includes articulated joints controlled by micro servos, allowing for accurate replication of ASL gestures. The design emphasizes lightweight construction while maintaining structural rigidity.

Key design considerations included tendon routing for finger actuation, servo mounting points, and wire management. The modular design allows for easy assembly, maintenance, and future upgrades. Material selection focused on PLA+ for strength and print quality.

Electrical Design

The electrical system centers on an Arduino microcontroller that drives five servo motors, one per finger. A custom servo driver board distributes power and control signals. The system operates on a 5 V power supply with current limiting for safety.

The camera module connects via USB to the processing computer, which runs the ML model and sends servo commands to the Arduino over serial communication. This architecture separates the computationally intensive ML inference from real-time servo control.
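The host-to-Arduino link described above could be sketched as follows. This is a minimal illustration, not the project's actual protocol: the comma-separated ASCII line format, the 0 to 180 degree servo range, and the function names `encode_command` and `decode_command` are assumptions made for the example.

```python
from typing import List, Sequence

NUM_FINGERS = 5                 # one servo per finger, as stated in the text
MIN_ANGLE, MAX_ANGLE = 0, 180   # assumed servo travel in degrees


def encode_command(angles: Sequence[int]) -> bytes:
    """Pack five servo angles into one newline-terminated ASCII line.

    The "a,b,c,d,e\\n" wire format is a hypothetical choice for this sketch.
    """
    if len(angles) != NUM_FINGERS:
        raise ValueError(f"expected {NUM_FINGERS} angles, got {len(angles)}")
    # Clamp each angle so an out-of-range value cannot overdrive a servo.
    clamped = [max(MIN_ANGLE, min(MAX_ANGLE, int(a))) for a in angles]
    return (",".join(str(a) for a in clamped) + "\n").encode("ascii")


def decode_command(line: bytes) -> List[int]:
    """Inverse of encode_command, mirroring what the firmware would parse."""
    return [int(part) for part in line.decode("ascii").strip().split(",")]
```

With pyserial, the encoded bytes could be passed to the `write` method of an open `serial.Serial` port; the port name and baud rate depend on the specific setup and are not given in this write-up.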

Software Architecture

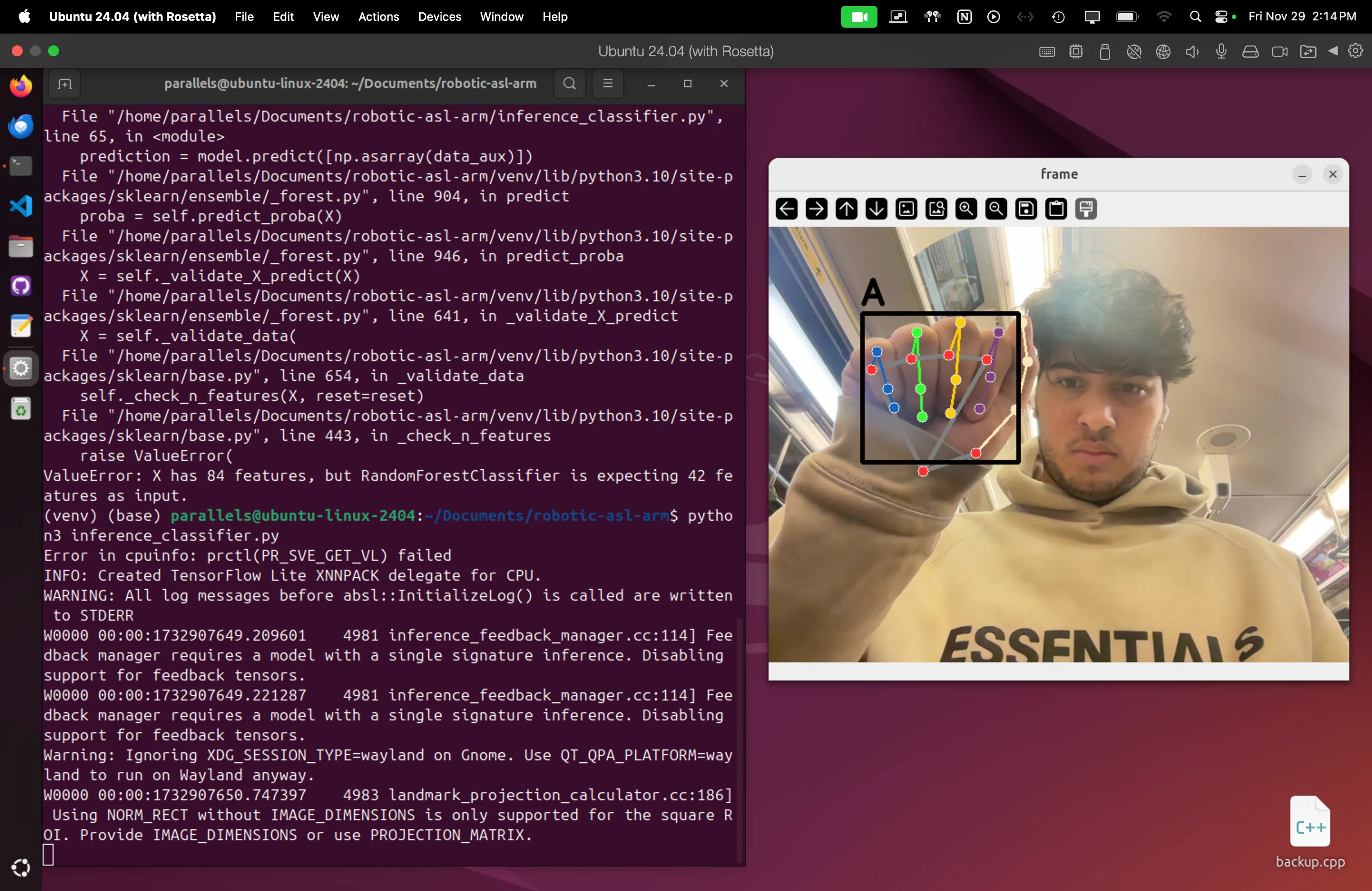

The software stack consists of three main components: (1) a computer vision pipeline using OpenCV for hand tracking and feature extraction, (2) a TensorFlow-based neural network trained on ASL gesture datasets for real-time classification, and (3) Arduino firmware for smooth servo control and interpolation.
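The servo interpolation handled by the firmware could look like the following, shown in Python for consistency with the host-side scripts rather than as Arduino code. Linear interpolation, the step count, and the name `interpolate_pose` are assumptions; the actual firmware may use a different easing scheme.

```python
from typing import Iterator, List, Sequence


def interpolate_pose(
    start: Sequence[int], target: Sequence[int], steps: int
) -> Iterator[List[int]]:
    """Yield `steps` intermediate poses moving linearly from start to target.

    The final yielded pose equals `target`, so the hand always ends exactly
    on the requested sign.
    """
    if steps < 1:
        raise ValueError("steps must be at least 1")
    if len(start) != len(target):
        raise ValueError("start and target must have the same length")
    for i in range(1, steps + 1):
        t = i / steps  # fraction of the move completed, in (0, 1]
        yield [round(s + (e - s) * t) for s, e in zip(start, target)]
```

Writing each intermediate pose to the servos with a short delay between steps gives a gradual motion instead of a single jump to the target angles.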

The ML model was trained on 10,000+ labeled hand gesture images, achieving 95% accuracy on the test set. The system processes frames at 30 FPS, providing responsive gesture recognition. Python scripts handle the camera interface, model inference, and serial communication with the Arduino.
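The division of work among the Python scripts can be sketched as a loop with the camera, model, and serial link passed in as plain callables. The names (`run_pipeline`, `classify`, `send_pose`, `POSES`), the pose angles, and the choice to send a command only when the recognized gesture changes are all assumptions for illustration. In a real setup the callables would wrap the OpenCV capture, the TensorFlow model, and the serial port.

```python
from typing import Callable, Dict, Iterable, List, Optional

# Hypothetical servo angles (degrees) per gesture label, thumb to pinky.
POSES: Dict[str, List[int]] = {
    "A": [90, 180, 180, 180, 180],
    "B": [180, 0, 0, 0, 0],
}


def run_pipeline(
    frames: Iterable[object],
    classify: Callable[[object], Optional[str]],
    send_pose: Callable[[List[int]], None],
    poses: Dict[str, List[int]] = POSES,
) -> int:
    """Classify each frame and forward a pose when the gesture changes.

    Returns the number of poses sent.
    """
    last_label: Optional[str] = None
    sent = 0
    for frame in frames:
        label = classify(frame)
        # Skip frames with no detection or an unknown label.
        if label is None or label not in poses:
            continue
        # Avoid resending the pose the hand is already holding.
        if label == last_label:
            continue
        send_pose(poses[label])
        last_label = label
        sent += 1
    return sent
```

Passing the dependencies in as callables keeps the loop testable without a camera or an Arduino attached.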

Docker containers were used for deployment to keep the environment consistent across hardware platforms. The system was deployed and tested on a MacBook Pro M2, then on a Raspberry Pi 4 and a Raspberry Pi 5, and finally on a Windows PC. Kubernetes was explored for orchestration, but standalone Docker was selected as the more efficient option for this single-node application.

Results & Achievements

The completed system recognizes and replicates 15 common ASL gestures with high accuracy. Response time from gesture detection to physical replication averages 200 ms, enabling real-time communication. The project was showcased at university robotics demonstrations and received positive feedback for its accessibility focus.
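To put the latency figure in context, it can be related to the frame rate with simple arithmetic. The only inputs are the 30 FPS frame rate and the 200 ms average response time quoted in this write-up; how that time splits between inference, serial transfer, and servo travel is not stated and is not modeled here.

```python
FPS = 30          # frame rate quoted in the write-up
LATENCY_MS = 200  # average detection-to-replication time quoted in the write-up

# Time between consecutive camera frames.
frame_period_ms = 1000 / FPS

# How many frame periods elapse during one average response.
frames_per_response = LATENCY_MS * FPS / 1000

print(f"frame period: {frame_period_ms:.1f} ms")
print(f"response spans about {frames_per_response:.0f} frame periods")
```

In other words, roughly six new frames arrive while the hand is still completing its response to one recognized gesture.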

Future improvements include expanding the gesture vocabulary, implementing a bilateral hand design, and developing a standalone embedded system that runs ML inference without a separate computer.